Lastly, this new feature can be used to diagnose previous troubleshooting sessions. Use Case #3: See Previous Troubleshooting Attempts conf files changes themselves will always show up exactly as they changed in the _configtracker index. conf file since it is interpreted as a default value in the filesystem. For example a change of certain alert values to 24 hours may show up as null (instead of 24h) in the corresponding. Note: UI changes don’t always map 1-to-1 with. In your latest search result, expand the “changes” and “properties” sections to see the new and old values of your alert configurations.Navigate to the “Search” tab and execute the following search: index= “_configtracker” sourcetype=”splunk_configuration_change” data.path = “*nf”.Change the “Trigger Conditions” section from is greater than 14 to is greater than 23.Change the Expires 72 hours option to Expires 56 hours.In the “Frequency dropdown” section, change Run every day to Run every month.In the “Search & Reporting” App, navigate to the “Alerts” tab and on an existing alert click Edit > Edit Alert.Thus, a user changing the configuration settings with an existing alert can find these changes logged in the “_configtracker” index. | table modtime path name prop_name new_value old_valueīelow, you can see an example of how local configuration changes made in the UI are seamlessly translated to the underlying configuration files. Index=_configtracker sourcetype="splunk_configuration_change" data.path=*nf Use Case #1: See Config File Changes in a Simple Table ViewĪ simple table view with the following query can provide a fast way for users to understand what types of file paths, stanzas, and properties are changing within an environment: conf file changes related to the creation, updating, and deletion of. The log files come from configuration_change.log which include. 
In the Splunk Enterprise Spring 2022 Beta (interested customers can apply here), users have access to a new internal index for configuration file changes called “_configtracker”. These changes have never been natively tracked within Splunk, leading to confused team members and befuddled customer support reps. Add up the myriad of configuration changes that can happen every day and you might encounter realities that are different than expected for any number of reasons. conf files and forget that those changes ever occurred. Unfortunately a side effect of this was that multiple team members could change underlying. And for years, we’ve enabled admins to customize things like system settings, deployment configurations, knowledge objects and saved searches to their hearts’ content.

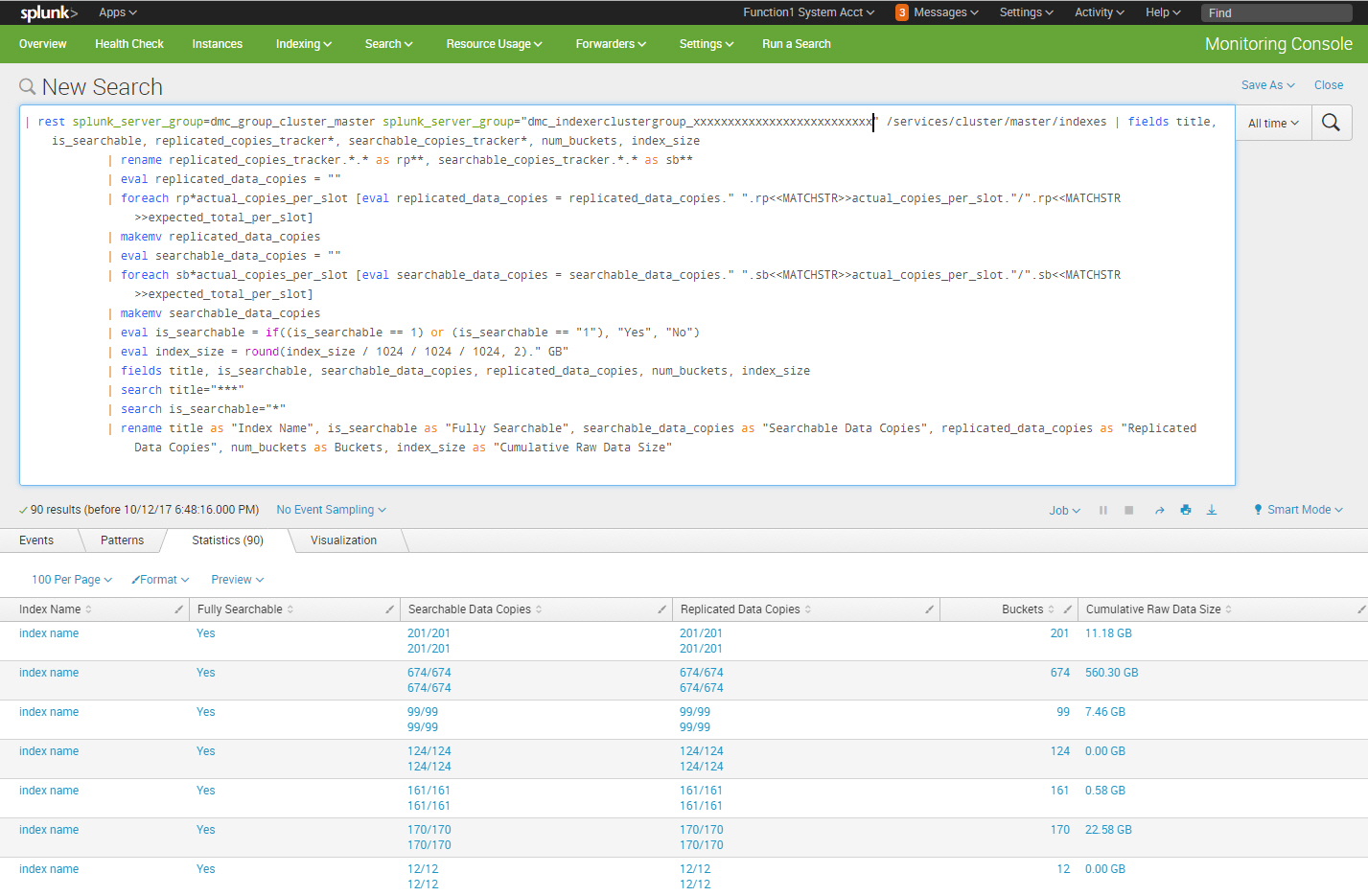

How do I configure the forwarder to parse the output to the log file?ĭETAIL Take Action=> Number of encryption certificates of bes license: įAIL Take Action=> 1.7.6: Actionsite Size Check Actionsite Size CheckįAIL Take Action=> ActionSite Size is too large: ĭETAIL Take Action=> Total Stopped/Expired Action count (more than 30 days old): ]įAIL Take Action=> 1.10.N ote: This feature is now available for Splunk Enterprise customers in the Spring 2022 BETA.įor years customers have leveraged the power of Splunk configuration files to customize their environments with flexibility and precision. The forwarder it taking the entire entry from the script as one event, but I need each line to be an event. The problem is, I think, that a custom python script runs and outputs the results at one time to the log file. I have a log file that Splunk is monitoring.
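As a rough illustration of what the table view over the "_configtracker" index produces, the Python sketch below flattens one exported change event into rows with the columns modtime, path, name, prop_name, new_value and old_value. The JSON layout is an assumption inferred from the field names used in the searches in this post (a `data` object holding `path`, `modtime`, and a `changes` list whose entries carry a `stanza` name and a `properties` list); it is not a documented schema, and the sample event, its path and its values are made up for the example.

```python
import json
from typing import Any, Dict, Iterator

# Column order mirrors the "| table ..." clause of the search in this post.
COLUMNS = ("modtime", "path", "name", "prop_name", "new_value", "old_value")


def flatten_change_event(event: Dict[str, Any]) -> Iterator[Dict[str, Any]]:
    """Yield one row per changed property in a single change event.

    Assumed (hypothetical) layout: event["data"]["changes"] is a list of
    stanza-level changes, each with a "stanza" name and a "properties" list.
    """
    data = event.get("data", {})
    for change in data.get("changes", []):
        for prop in change.get("properties", []):
            yield {
                "modtime": data.get("modtime"),
                "path": data.get("path"),
                "name": change.get("stanza"),
                "prop_name": prop.get("name"),
                "new_value": prop.get("new_value"),
                "old_value": prop.get("old_value"),
            }


def format_row(row: Dict[str, Any]) -> str:
    """Render a row as pipe-separated text; missing values print as 'null'."""
    return " | ".join(
        "null" if row[col] is None else str(row[col]) for col in COLUMNS
    )


# Hypothetical sample event; every value here is invented for illustration.
SAMPLE_EVENT = json.loads("""
{
  "data": {
    "path": "/opt/splunk/etc/apps/search/local/savedsearches.conf",
    "modtime": "2022-03-01 10:15:00",
    "changes": [
      {
        "stanza": "Example Alert",
        "properties": [
          {"name": "alert.expires", "new_value": "56h", "old_value": "72h"},
          {"name": "cron_schedule", "new_value": "0 0 1 * *", "old_value": "0 0 * * *"}
        ]
      }
    ]
  }
}
""")

if __name__ == "__main__":
    print(" | ".join(COLUMNS))
    for row in flatten_change_event(SAMPLE_EVENT):
        print(format_row(row))
```

Emitting one row per property, rather than one per event, keeps each old/new value pair on its own line, which is what makes a table like this easy to scan when several settings of the same alert change at once.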
